Whitepaper, March 2026

Guardrails Stop Mistakes. Trust Infrastructure Prevents Them.

The Enterprise Case for AI Trust Infrastructure

As enterprises accelerate AI adoption in regulated, high-stakes environments, a critical assumption has taken hold: that deploying guardrails is the same as trusting AI. It is not.

Chief Risk Officers

Compliance Leaders

Technology Executives

01

The World Has Changed

AI Is Everywhere. Trust Is Not.

Enterprises aren't just experimenting with AI. They're trusting it to deny loans, approve claims, route customers, flag fraud, and automate decisions that affect real people at scale.

83%

of AI failures are decision failures, not output violations

1 in 4

regulated AI deployments face audit readiness gaps

0%

of guardrail vendors provide full decision lineage

"The question is no longer whether your AI behaved. The question is whether it was allowed to decide, and whether you can prove it."

02

The Guardrail Illusion

Guardrails Moderate Behavior. They Don't Make Decisions Trustworthy.

Guardrails are valuable tools. They prevent harmful outputs, enforce content policies, and constrain model behavior at the interaction layer. But guardrails are structurally incomplete: they operate at the surface, inspecting messages, filtering outputs, and applying rules. They are stateless, session-scoped, and interaction-bound.

Real Scenario: Financial Services

Case Study

An AI lending system denies a loan application. The output is clean: no toxic language, no policy violation. Guardrails pass it through without a flag. But the denial was driven by training data with historical bias. Input features came from stale CRM records. The model version was never validated for this product line. No guardrail in the world would have caught any of this.
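The gap in this scenario can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any vendor's product: `Decision`, `output_guardrail`, `decision_checks`, the blocked-terms list, the model and product names, and the 30-day freshness threshold are all hypothetical. It shows a denial that passes a surface-level output check while failing checks on model scope and input freshness. Detecting historical bias in training data is a separate problem and is not attempted here.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical values, chosen only for this illustration.
BLOCKED_TERMS = {"idiot", "stupid"}
MAX_FEATURE_AGE_DAYS = 30


@dataclass
class Decision:
    output_text: str          # message shown to the applicant
    product_line: str         # product the decision applies to
    model_version: str        # model that produced the decision
    validated_for: frozenset  # product lines this model version was validated for
    features_as_of: date      # date the input features were last refreshed
    decided_on: date          # date the decision was made


def output_guardrail(d: Decision) -> bool:
    """Surface check: inspects only the output text."""
    words = {w.strip(".,!?").lower() for w in d.output_text.split()}
    return not (words & BLOCKED_TERMS)


def decision_checks(d: Decision) -> list[str]:
    """Decision-level checks: inspect model scope and input freshness."""
    findings = []
    if d.product_line not in d.validated_for:
        findings.append(
            f"model {d.model_version} not validated for {d.product_line}"
        )
    age_days = (d.decided_on - d.features_as_of).days
    if age_days > MAX_FEATURE_AGE_DAYS:
        findings.append(f"input features are {age_days} days old")
    return findings


denial = Decision(
    output_text="We are unable to approve your application at this time.",
    product_line="small-business-loan",
    model_version="credit-v7",
    validated_for=frozenset({"personal-loan"}),
    features_as_of=date(2025, 9, 1),
    decided_on=date(2026, 3, 1),
)

print(output_guardrail(denial))  # the text itself is clean, so this passes
for finding in decision_checks(denial):
    print(finding)               # the decision-level problems surface here
```

The output check sees only `output_text`, so it has no way to notice the other fields. The decision-level checks need metadata that has to be captured at decision time, which is the point of the scenario.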

03

The Real Risk Stack

Enterprise AI Carries 6 Distinct Risk Categories

✓ Covered

Output Risk

AI says something harmful, off-policy, or toxic

✗ Not Covered

Model Risk

Wrong model version used; out-of-scope application

✗ Not Covered

Data Risk

Stale, biased, or corrupted inputs drive decisions

✗ Not Covered

Decision Risk

Legally permissible but factually wrong or unfair

✗ Not Covered

Context Risk

Agent uses poisoned context or wrong retrieval

✗ Not Covered

Governance Risk

Cannot prove authority, policy, or legitimacy

04

Three Lines of Defense

Guardrails Live in the First Line. Enterprise AI Risk Doesn't.

1st Line

Guardrails Gap

Local controls only. No traceability or systemic integrity.

Trust Infrastructure

Decision context tracking. Agent authority governance. Failure diagnostics.

2nd Line

Guardrails Gap

Cannot answer: which model? Which data? Was it approved?

Trust Infrastructure

Decision provenance. Model lineage. Policy application audit trail.

3rd Line

Guardrails Gap

No evidence chain. Cannot reconstruct decisions under scrutiny.

Trust Infrastructure

Forensic-grade decision reconstruction. Audit-ready lineage artifacts.
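One way to picture an evidence chain that supports later reconstruction is an append-only log in which each entry's hash covers the previous entry's hash. The sketch below is an assumption-laden illustration, not the whitepaper's or any product's design: `DecisionLog`, its methods, and the record fields (`decision_id`, `model_version`, `data_source`, `policy_id`) are hypothetical names. It uses only the standard-library `hashlib` and `json` modules.

```python
import hashlib
import json


def _digest(record: dict, prev_hash: str) -> str:
    """Hash a record together with the previous entry's hash."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class DecisionLog:
    """Append-only log; editing any past record breaks verification."""

    def __init__(self):
        self.entries = []  # list of (record, entry_hash) tuples

    def append(self, record: dict) -> str:
        prev_hash = self.entries[-1][1] if self.entries else ""
        entry_hash = _digest(record, prev_hash)
        self.entries.append((dict(record), entry_hash))
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash in order and compare with the stored one."""
        prev_hash = ""
        for record, stored_hash in self.entries:
            if _digest(record, prev_hash) != stored_hash:
                return False
            prev_hash = stored_hash
        return True

    def reconstruct(self, decision_id: str):
        """Return the recorded lineage for one decision, or None."""
        for record, _ in self.entries:
            if record.get("decision_id") == decision_id:
                return record
        return None


log = DecisionLog()
log.append({
    "decision_id": "D-1001",
    "model_version": "credit-v7",
    "data_source": "crm-snapshot-2025-09-01",
    "policy_id": "lending-policy-12",
    "outcome": "deny",
})
log.append({
    "decision_id": "D-1002",
    "model_version": "credit-v7",
    "data_source": "crm-snapshot-2025-09-01",
    "policy_id": "lending-policy-12",
    "outcome": "approve",
})

print(log.verify())
print(log.reconstruct("D-1001"))
```

Chaining the hashes means an auditor can detect an after-the-fact edit to any record without trusting the party that holds the log. A production system would also need durable storage and access control, which this sketch omits.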

05

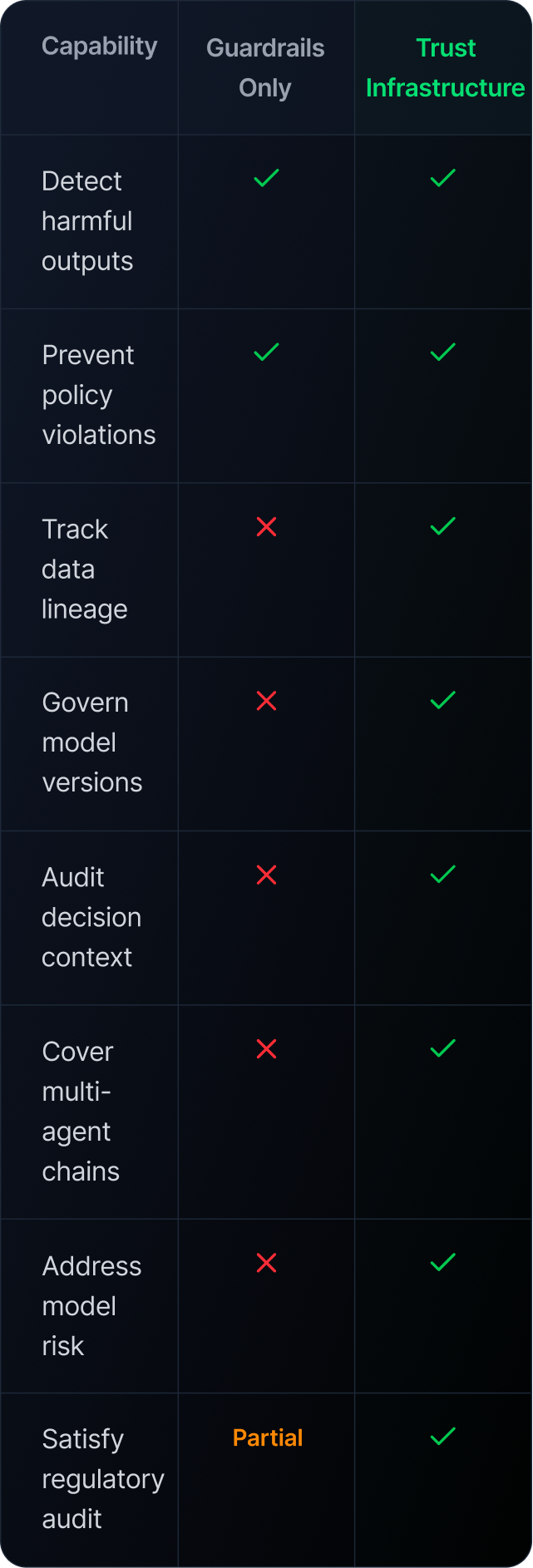

Capability Comparison

What Trust Infrastructure Adds

06

The Trust Imperative

5 Questions Every Regulated Enterprise Should Be Asking

01

Can you trace every AI decision back to its data source, model version, and policy?

02

If a regulator requests audit evidence for an AI-driven outcome from 90 days ago, can you reconstruct it?

03

Do your second-line risk and compliance teams have real visibility into how AI decisions are made?

04

When an AI agent calls a tool, queries a database, or triggers a workflow, who authorized that action?

05

If your AI system produces a discriminatory or erroneous decision, can you determine root cause within hours?
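The fourth question, about who authorized an agent's action, can be made concrete with a small sketch. Everything named here is hypothetical and chosen for illustration: `Grant`, `AuthorityRegistry`, the agent and action identifiers, and the approver label. The idea shown is that each tool call is checked against an explicit grant and that the check itself is recorded, whether or not the call is allowed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    agent_id: str     # which agent the grant applies to
    action: str       # which tool call or workflow it may trigger
    approved_by: str  # who signed off on the grant


class AuthorityRegistry:
    """Looks up grants and records every authorization attempt."""

    def __init__(self, grants):
        self._grants = {(g.agent_id, g.action): g for g in grants}
        self.audit_trail = []

    def authorize(self, agent_id: str, action: str) -> bool:
        grant = self._grants.get((agent_id, action))
        # Record the attempt either way, so denials are also evidence.
        self.audit_trail.append({
            "agent_id": agent_id,
            "action": action,
            "allowed": grant is not None,
            "approved_by": grant.approved_by if grant else None,
        })
        return grant is not None


registry = AuthorityRegistry([
    Grant("claims-agent", "query_policy_db", approved_by="risk-committee"),
])

print(registry.authorize("claims-agent", "query_policy_db"))
print(registry.authorize("claims-agent", "issue_refund"))
print(registry.audit_trail)
```

With a record like `audit_trail`, the question "who authorized that action?" has a lookup answer: the approver attached to the matching grant, or a logged denial when no grant exists.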

AI Trust Is Entering Its Next Phase

Phase 1

Behavior Control

Guardrails

Filters

Output Safety

Phase 2

Decision Trust

Lineage

Data Quality

Defensibility

"Guardrails tell you the AI behaved. Trust infrastructure tells you the AI was right to act. In regulated industries, only one of those answers satisfies an auditor."

Explore AI Trust Infrastructure

Learn how Arhasi's Integrity-First AI can help your enterprise


